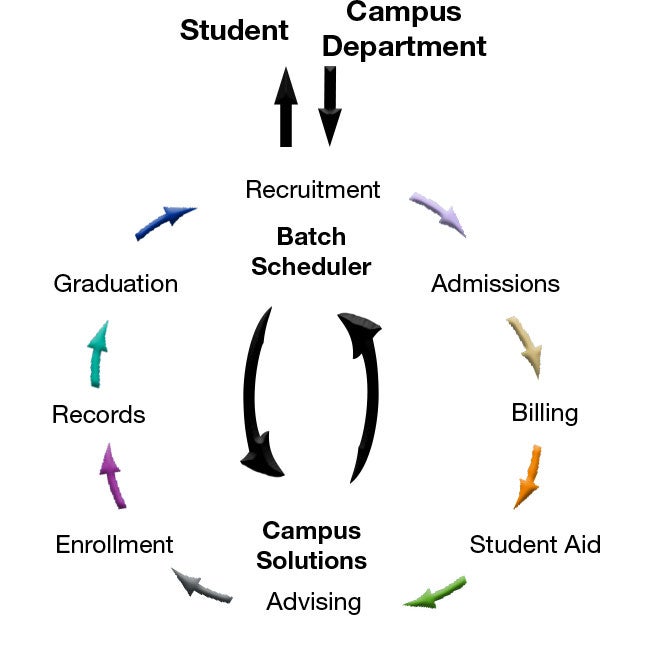

(For example, see Lambda architecture.) Batch processing typically leads to further interactive exploration, provides the modeling-ready data for machine learning, or writes the data to a data store that is optimized for analytics and visualization. In a big data context, batch processing may operate over very large data sets, where the computation takes significant time. The processing may include multiple iterative steps before the transformed results are loaded into an analytical data store, which can be queried by analytics and reporting components.

For example, the logs from a web server might be copied to a folder and then processed overnight to generate daily reports of web activity.

Batch processing is used in a variety of scenarios, from simple data transformations to a more complete ETL (extract-transform-load) pipeline. The data is then processed in-place by a parallelized job, which can also be initiated by the orchestration workflow. In this scenario, the source data is loaded into data storage, either by the source application itself or by an orchestration workflow.

A PDF version of the user manual is available to download here.

Details from Divergent on the latest 1.1.2 update:

EditReady 1.1.2 contains a number of fixes and enhancements and is recommended for all users.

A common big data scenario is batch processing of data at rest.

You can find out more about EditReady on the Divergent media website. Be aware that the application of a LUT will slow down the conversion process. This is useful if you want to shoot S-log2 but hand off rushes that have a more graded look to clients for inspection.

Divergent claim EditReady performs faster conversions than major rivals.

EditReady also has a useful H.264 conversion option that allows you to quickly generate files for web.

Another nice feature is the ability to batch apply a lookup table (LUT) in 3DL and Cube formats to the images. In practice it was fast enough for my needs.
Divergent claim the conversion to be faster than many of their rivals but I haven’t had a chance to test this scientifically.

The conversion process is pretty speedy, although for large batches you will still have plenty of time to go and get a cup of tea while converting. ProRes flavours include ProRes 422 LT, 422 Proxy, 422, 422 HQ and 4444. It offers batch conversion of XAVC-S and many other popular codecs (including Canon C300 MXF and GoPro files) into Apple ProRes or DNxHD and importantly keeps the a7S time codes intact. At $49.99 it is considerably cheaper than Catalyst Prepare and has a simple and intuitive interface that allows you to browse entire folders and make selections quickly. Obviously you can import the footage into a newer edit package like Premiere and then export it with a new timecode as a ProRes file, but this takes time and is a real pain with large amounts of footage.

Enter EditReady from the nice folks at Divergent media. The more expensive Catalyst Prepare does offer batch conversions as well as some very nice image control options, but costs $199.95. Sony’s own low-cost Catalyst Browse software will convert files but only one at a time. This might not matter to the casual user but in broadcast or long form documentary this can be critical. There are several programs out there that will batch convert XAVC-S to Apple ProRes or DNxHD, but until now I haven’t found an inexpensive way to do so and preserve timecode. The conventional solution in such cases is to convert the footage into something more editable.

If you shoot with a Sony a7S on a regular basis and hand off your material to be edited by someone else, then you have probably come across this problem: The wonderful internal XAVC-S codec doesn’t play nice with older editing systems, especially Final Cut Pro 7 and older versions of AVID.
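To make the web-server log scenario mentioned earlier a bit more concrete, here is a minimal Python sketch of an overnight batch job that reads every log file in a folder and builds a per-day summary. Treat it as an illustration only: the Common Log Format layout, the `*.log` file pattern, and the function names `parse_line` and `daily_report` are all assumptions of mine, not something taken from any particular product.

```python
import re
from collections import Counter, defaultdict
from datetime import datetime
from pathlib import Path

# Assumption: lines follow the Common Log Format, for example
# 127.0.0.1 - - [10/Oct/2023:13:55:36 +0000] "GET /index.html HTTP/1.1" 200 2326
LOG_LINE = re.compile(
    r'\S+ \S+ \S+ \[(?P<ts>[^\]]+)\] '
    r'"(?P<method>\S+) (?P<path>\S+)[^"]*" (?P<status>\d{3}) \S+'
)


def parse_line(line):
    """Return (date, path, status) for a well-formed line, else None."""
    match = LOG_LINE.match(line)
    if match is None:
        return None
    stamp = datetime.strptime(match.group("ts"), "%d/%b/%Y:%H:%M:%S %z")
    return stamp.date(), match.group("path"), int(match.group("status"))


def daily_report(log_dir):
    """Aggregate every *.log file in log_dir into per-day statistics."""
    report = defaultdict(
        lambda: {"requests": 0, "errors": 0, "paths": Counter()}
    )
    for log_file in sorted(Path(log_dir).glob("*.log")):
        with log_file.open(encoding="utf-8", errors="replace") as handle:
            for line in handle:
                parsed = parse_line(line)
                if parsed is None:
                    continue  # skip malformed lines instead of aborting the batch
                day, path, status = parsed
                entry = report[day]
                entry["requests"] += 1
                if status >= 500:  # count server-side errors only
                    entry["errors"] += 1
                entry["paths"][path] += 1
    return dict(report)
```

Calling `daily_report("logs")` on a folder of copied log files returns a dictionary keyed by date, which a scheduler such as cron could then write out as the daily report. A real big data job would spread this work across many machines, but the shape is the same: load the data at rest, process it in one pass, and store the summarized result.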
